AI Deployed to Save Time and $$. Reviewing Ate Them for Lunch

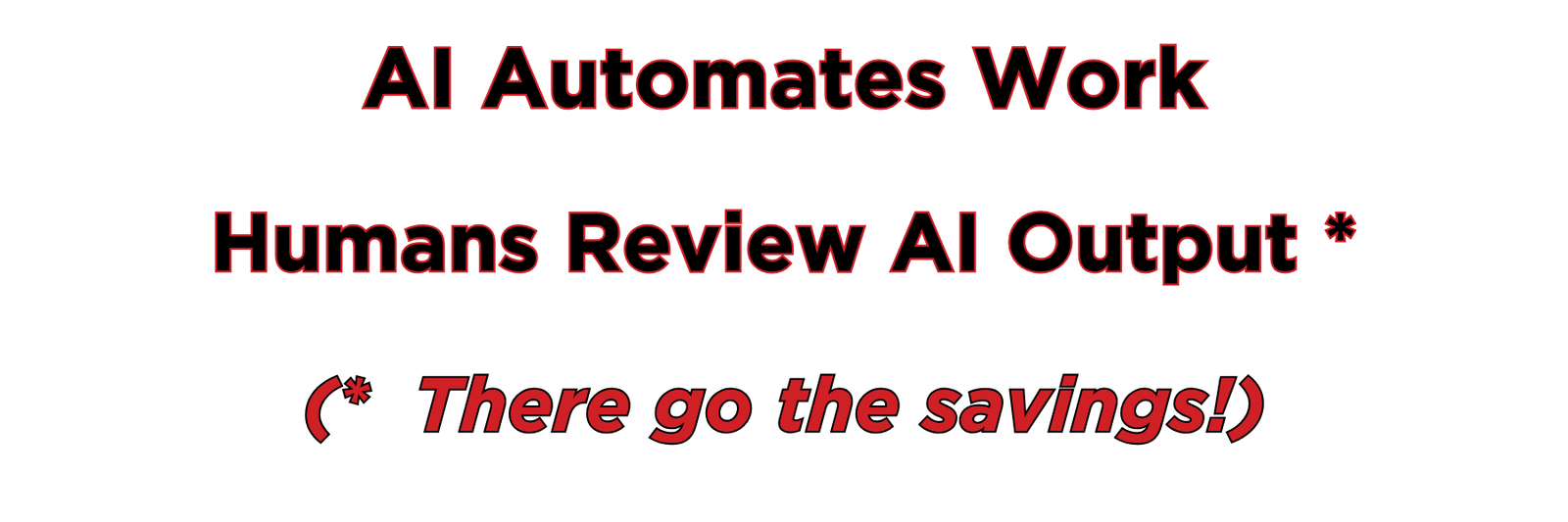

AI Automates Work

Humans Review AI Output *

(* There go the savings!)

The Pitch

Here is the pitch: AI does the work. You do something else with all the time you saved. Presumably something better. Something more human. Something that doesn’t involve reading the same paragraph four times trying to figure out if it said what you meant.

That was the pitch.

Here is what happened instead.

The Hours You Saved Are Busy

A survey of executives found they save 4.6 hours a week using AI tools. Impressive. That same survey found they spend 4 hours and 20 minutes a week validating AI-generated outputs. They saved 4.6 hours. They spent 4.3 of them checking the thing that saved them the 4.6 hours.

End users had it worse. They saved 3.6 hours. They spent 3 hours and 50 minutes reviewing. They didn’t get a coffee break. They ended the week 14 minutes in the hole.
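For anyone who wants to audit the audit, here is a minimal sketch of the arithmetic in Python. The function name `net_minutes` is invented for illustration; the only inputs are the survey figures quoted above, converted to minutes.

```python
def net_minutes(saved_hours: float, review_hours: int, review_minutes: int) -> int:
    """Minutes left over after reviewing; a negative result means a net loss."""
    saved = round(saved_hours * 60)                 # e.g. 3.6 hours -> 216 minutes
    spent = review_hours * 60 + review_minutes      # e.g. 3 h 50 min -> 230 minutes
    return saved - spent


executives = net_minutes(4.6, 4, 20)   # saved 4.6 h, reviewed 4 h 20 min
end_users = net_minutes(3.6, 3, 50)    # saved 3.6 h, reviewed 3 h 50 min

print(f"Executives: {executives:+d} minutes a week")
print(f"End users:  {end_users:+d} minutes a week")
```

Executives come out 16 minutes ahead. End users come out 14 minutes behind.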

This is what researchers are calling a “verification burden.” The work got faster, then it got longer again.

The AI Tax

Workday surveyed 3,200 employees and leaders and found that roughly 37% of the time saved through AI is consumed by rework — correcting, verifying, or rewriting outputs that didn’t quite land. For every ten hours AI saves, nearly four go straight back into fixing what AI produced. They called it an “AI tax on productivity.” Which is accurate. Except taxes usually fund something.
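The same tax, itemized. This is a rough sketch that assumes the 37% share applies evenly to every hour saved, which the survey does not claim; `REWORK_SHARE` and `split_savings` are names made up for this example.

```python
REWORK_SHARE = 0.37  # "roughly 37%", the figure quoted above (assumed flat rate)


def split_savings(hours_saved: float) -> tuple[float, float]:
    """Return (hours lost to rework, hours actually kept)."""
    rework = hours_saved * REWORK_SHARE
    return rework, hours_saved - rework


rework, kept = split_savings(10)
print(f"Rework: {rework:.1f} h, kept: {kept:.1f} h")
```

Ten hours in, 3.7 back out to rework, 6.3 left to spend on something more human.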

Only 14% of employees consistently achieve net-positive outcomes once rework is accounted for. Fourteen percent. The rest are breaking even at best, running a deficit at worst, and reporting to their managers that AI has made them significantly more productive. Both things are true. Neither thing adds up.

The Confidence Gap

Here is the part that explains the whole thing. Sixty percent of executives say they are highly confident in AI-generated outputs. One in ten end users says the same.

Sit with that for a second.

The people who touch the outputs every day — who read them, fix them, rewrite them, and submit them — trust them the least. The people who see them once in a meeting trust them the most. The verification burden lands on the skeptics. The confidence lives with the people who never open the file.

And it means the 14% finding makes complete sense. The people closest to the work know exactly where the savings went.

What Got Automated

The drafting got automated. The first pass, the boilerplate, the structure — automated. What did not get automated: knowing whether any of it is right. Knowing whether the tone shifted in paragraph three. Knowing whether the summary captured what the source actually said or a plausible approximation of it. Knowing whether the numbers are real.

That part stayed with you.

It was always going to. Automation has never moved accountability. It has only ever moved the task. The task moved. The accountability didn’t. The hours quietly followed the accountability.

Logic to Apply

AI is genuinely fast. It produces things quickly, confidently, and at volume. That part works. The part that doesn’t work is the assumption that production and completion are the same thing. They are not. They have never been. You have always had to check the work. You just used to be the one who did it from the start.

Now you do it at the end. After the AI. In the time you saved.

There go the savings.

Editor’s Note: Those humans did not learn the trick I use — have a different AI or two review and give feedback to the first AI. This only wastes 3 hours, leaving me time to walk Jojo.
